But capturing high-resolution images using light and electron microscopy is only the first step. The real challenge lies in the evaluation: How can we quickly, accurately, and reproducibly convert a particle image into valid data on particle size and shape?

The status quo: Manual evaluation

In many laboratories, particle images are still evaluated entirely by hand. An employee marks each particle on the screen, measures its diameter, and estimates its shape. This is not only monotonous but also a major economic bottleneck.

The numbers speak for themselves: an experienced specialist often needs 30 to 60 minutes to accurately measure just 100 particles. Extrapolated to a complete test series, the cost and time involved are hardly acceptable in modern production. Regulatory requirements exacerbate the problem, because safety assessments require a minimum number of detected particles (> 1,000) for statistical significance; at the rate above, that alone amounts to 5 to 10 hours of manual work. In addition, objectivity suffers because results can vary from employee to employee.
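The extrapolation can be made explicit with a short calculation. This is only a sketch: it assumes the measuring rate scales linearly with the particle count, and the names `manual_hours`, `MINUTES_PER_100`, and `MIN_PARTICLES` are chosen here for illustration.

```python
# Figures taken from the text: 30 to 60 minutes per 100 particles,
# and a regulatory minimum of roughly 1,000 detected particles.
MINUTES_PER_100 = (30, 60)
MIN_PARTICLES = 1000  # the text says "> 1,000", so this is a lower bound


def manual_hours(particles, minutes_per_100):
    """Hours of manual work, assuming effort grows linearly with particle count."""
    return particles / 100 * minutes_per_100 / 60


low, high = (manual_hours(MIN_PARTICLES, m) for m in MINUTES_PER_100)
print(f"{low:.0f} to {high:.0f} hours of manual measurement")
# prints: 5 to 10 hours of manual measurement
```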

Initial automation: Classic image processing methods

Rule-based algorithms have been developed over decades to replace manual work. These follow a fixed logic and use tools such as:

- Preprocessing: Noise is reduced through background subtraction and various filter methods.

- Isolation: Objects are isolated from the background using thresholding or edge detection.

- Shape optimization: Morphological operations (such as erosion and dilation) smooth particle edges, while contour estimation mathematically describes the outer shape.

- Separation: More complex approaches such as the watershed transform, concave point segmentation, or superpixel segmentation attempt to separate touching particles from one another.

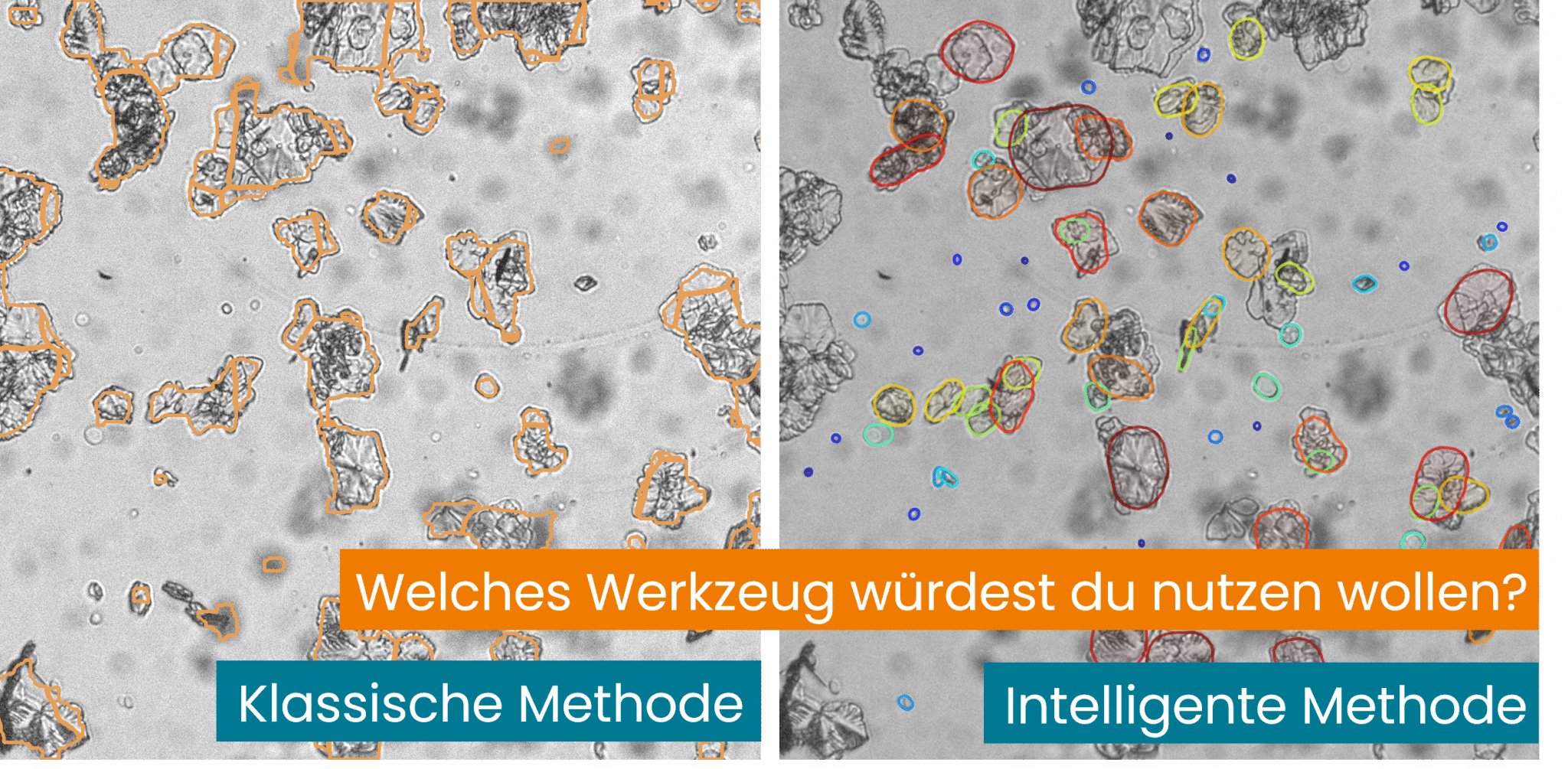
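A minimal sketch of such a rule-based pipeline, reduced to thresholding, connected-component isolation, and an area-equivalent diameter. It uses only the Python standard library and a tiny synthetic image; the function names and the threshold level are assumptions made for illustration, and production tools typically rely on dedicated image processing libraries instead.

```python
import math
from collections import deque


def threshold(image, level):
    """Binarize: True where a pixel is brighter than `level`."""
    return [[px > level for px in row] for row in image]


def component_areas(mask):
    """Pixel area of each 4-connected foreground region (breadth-first fill)."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    areas = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            queue, area = deque([(y, x)]), 0
            while queue:
                cy, cx = queue.popleft()
                area += 1
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            areas.append(area)
    return areas


def equivalent_diameter(area):
    """Diameter of the circle that has the same area as the region."""
    return 2.0 * math.sqrt(area / math.pi)


# Synthetic image: dark background (10) with two bright particles (200).
image = [[10] * 12 for _ in range(8)]
for y in range(1, 4):
    for x in range(1, 4):
        image[y][x] = 200  # 3 x 3 particle
for y in range(4, 6):
    for x in range(7, 9):
        image[y][x] = 200  # 2 x 2 particle

areas = component_areas(threshold(image, 100))
diameters = [equivalent_diameter(a) for a in areas]
print(areas)  # [9, 4]
```

The result depends entirely on the fixed threshold of 100: every pixel above it counts as particle, every pixel below it as background.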

The limit: Despite these tools, classic algorithms often break down on real-world images. As soon as particles overlap significantly or the contrast fluctuates, these methods cannot “understand” the object: they see only light and dark pixel values, not physical objects. Touching particles are merged into one, which distorts the particle size distribution, and at very high particle densities no meaningful evaluation is possible.
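The merging error can be reproduced on synthetic data. The sketch below rasterizes two disks of radius 5 pixels, once well separated and once slightly overlapping, and counts connected regions as a purely threshold-based method would. The grid size, radius, and function names are illustrative assumptions.

```python
import math


def disk_mask(h, w, centers, radius):
    """Boolean grid that is True wherever a pixel lies inside any disk."""
    return [[any((y - cy) ** 2 + (x - cx) ** 2 <= radius ** 2 for cy, cx in centers)
             for x in range(w)] for y in range(h)]


def component_areas(mask):
    """Pixel area of each 4-connected foreground region (iterative flood fill)."""
    todo = {(y, x) for y, row in enumerate(mask) for x, on in enumerate(row) if on}
    areas = []
    while todo:
        stack, area = [todo.pop()], 0
        while stack:
            y, x = stack.pop()
            area += 1
            for n in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                if n in todo:
                    todo.remove(n)
                    stack.append(n)
        areas.append(area)
    return areas


def equivalent_diameter(area):
    """Diameter of the circle that has the same area as the region."""
    return 2.0 * math.sqrt(area / math.pi)


RADIUS = 5
separate = disk_mask(15, 40, [(7, 7), (7, 30)], RADIUS)  # clear gap between disks
touching = disk_mask(15, 40, [(7, 7), (7, 16)], RADIUS)  # centers 9 px apart

sep_areas = component_areas(separate)
tch_areas = component_areas(touching)

print(len(sep_areas), len(tch_areas))  # 2 1
print([round(equivalent_diameter(a), 1) for a in tch_areas])
```

In the separated case, two particles of about 10.2 pixels equivalent diameter are found. In the overlapping case, the same two particles are reported as a single object of about 14.3 pixels, which shifts the size distribution toward larger values and halves the particle count.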

The solution of the future: AI-based object recognition

This is where artificial intelligence (AI) comes in. Unlike rigid, pixel-based logic, a neural network learns from examples.

- Harnessing intelligence: Because it learns complex patterns instead of applying rigid pixel rules, AI can recognize particles even at extremely high densities or high noise levels. The relevant properties of the particles can be taught to it through examples.

- Time savings: The effort required for the initial training or calibration of the AI quickly pays for itself. While classic algorithms have to be laboriously tuned manually for each new product, a well-trained AI adapts flexibly to new conditions.

- Optimizing training: Yes, AI needs data at the beginning. But modern tools already use pre-trained models that massively accelerate this process. The output is a fully automated analysis in real time.

Conclusion: Efficiency through intelligent automation

The era of manual evaluation and unstable “makeshift solutions” with classic algorithms is coming to an end. For companies that really want to understand particle processes and also implement inline technologies, AI is a prerequisite for scalability.